AI trained to conduct medical interviews can match, or even surpass, some human doctors when it comes to bedside manner.

This is according to the latest study by computer scientists at Cornell University. However, it is worth noting that the study was performed in collaboration with Google DeepMind researchers and has yet to be peer-reviewed.

Google AI vs. doctors

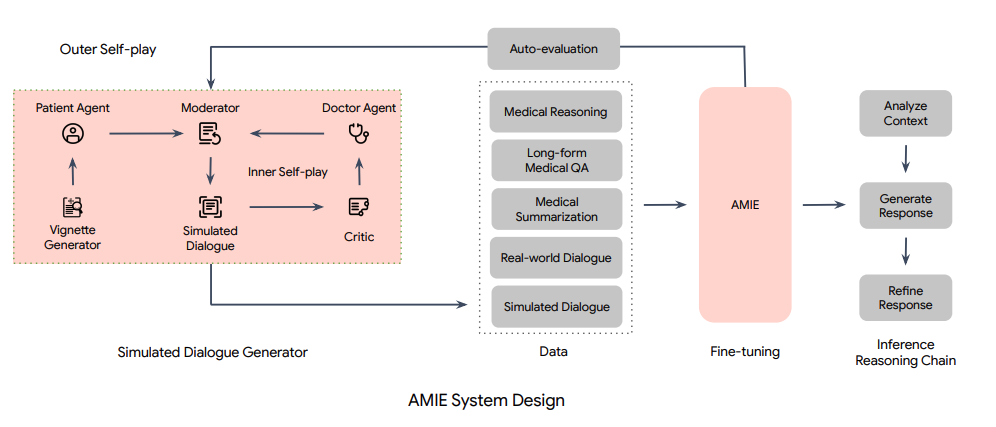

Researchers first built a large language model (LLM), based on Google technology, called the ‘Articulate Medical Intelligence Explorer’ (AMIE).

Next, they designed a framework for evaluating “clinically meaningful axes of performance”, including “history taking, diagnostic accuracy, management reasoning, communication skills, and empathy”. In other words, AMIE was evaluated in these categories to see if it possessed adequate medical examination and interview skills.

Researchers then recruited 20 participants “who have been trained to impersonate patients” and had them hold online chat consultations with AMIE and with 20 human clinicians. The participants were not told whether they were chatting with AMIE or with a human clinician.

A total of 149 patient scenarios were enacted. The performance of AMIE and of the human clinicians was then evaluated, and the participants were also asked to rate their experiences.

According to Nature, research scientists at Google Health say the study marks “the first time that a conversational AI system has ever been designed optimally for diagnostic dialogue and taking clinical history.”

Can it be a physician?

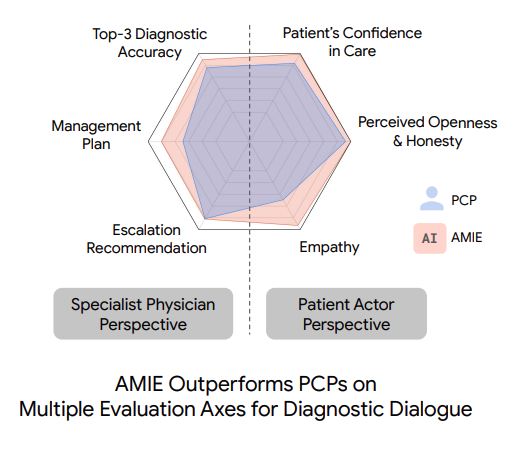

In the end, researchers found that AMIE “matched or surpassed the physicians’ diagnostic accuracy in all six medical specialties considered.”

“The bot outperformed physicians in 24 of 26 criteria for conversation quality, including politeness, explaining the condition and treatment, coming across as honest, and expressing care and commitment,” wrote the paper’s authors.

Does this mean an AI chatbot is now more empathetic than doctors when speaking to patients? And can we expect our next GP appointment to be led by an AI chatbot?

No, says Google Health clinical scientist Alan Karthikesalingam. He says the study “in no way means that a language model is better than doctors in taking clinical history.”

It is also worth keeping in mind that AMIE, like many other AI chatbots, operates via algorithms and is thus devoid of any human emotion. “The best we can ever aim for is a fairly good replication of prescribed compassionate behaviour,” Rebecca Johnson, doctoral researcher on tech ethics and generative AI at the University of Sydney and founder of EthicsGenAI.com, tells The Chainsaw.

“Algorithms have no inherent ability to be empathetic, compassionate, or any other human skill like that. They are algorithms, not living beings,” she adds.

There is also the problem of potential racial or gender bias in AMIE, which still needs to be addressed, the researchers said.

So, don’t worry – AI chatbots aren’t quite ready to face real patients yet.