Be careful what you believe about major brands on TikTok: a recent analysis by NewsGuard found that the platform is flooded with malicious misinformation about them. Brands that have been targeted include Balenciaga, Barilla, Bud Light, Chick-fil-A, Hobby Lobby, Kohl’s, and Target.

NewsGuard analysed 520 TikTok videos and found that 73 of them (14%) contained “false, misleading or unsubstantiated claims” targeting well-known brands. Those videos clocked a total of 52 million views, and nearly half of those views, or 26 million, were for “videos that used AI-generated or otherwise manipulated media to advance misinformation.”

AI-generated TikToks go viral

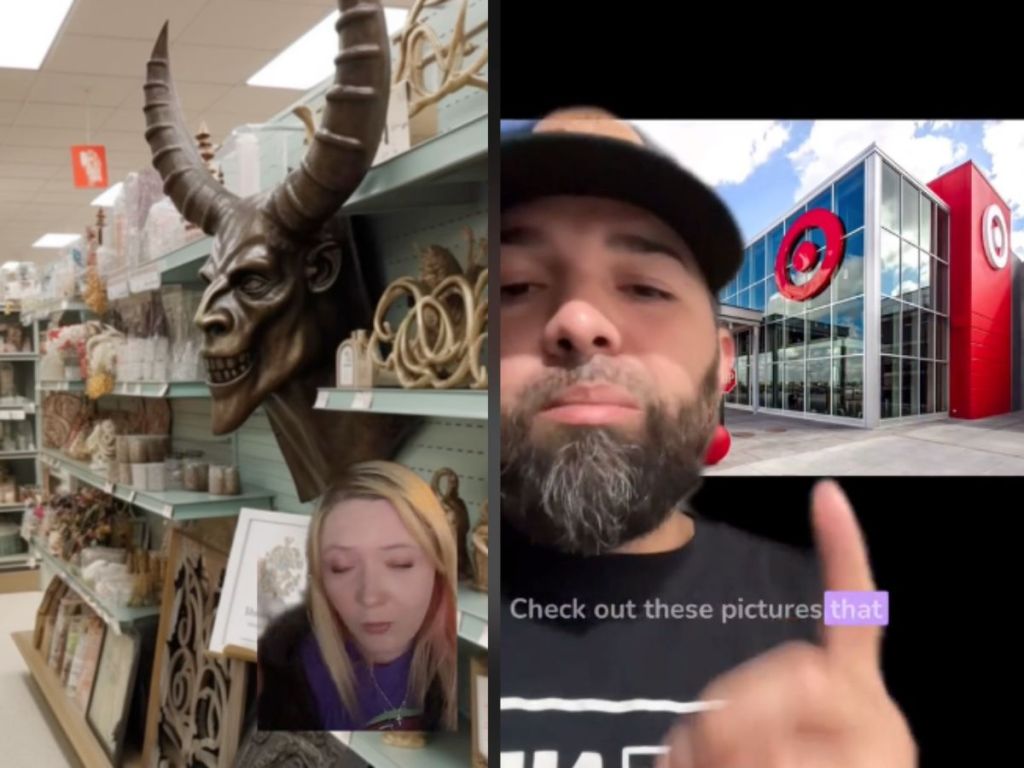

One example of AI-generated misinformation on the short video platform, according to NewsGuard, is a TikTok claiming that Target is selling “satanic clothing.”

The video, uploaded by Christian influencer King Joshua, falsely claims that the major US retailer is pushing an “evil agenda” by selling clothing with satanic imagery. It includes AI-generated photos of adults and kids wearing the supposed clothing, as well as photos purporting to show satanic merchandise on sale in Target stores.

The video had accumulated over 1.4 million views and 78,000 likes at the time of writing.

In another example, a TikTok video about Bud Light that contained “digitally manipulated” elements falsely claimed that the beverage brand had purchased a billboard ad to hit back at criticism over its partnership with trans star Dylan Mulvaney. The video racked up over 1.4 million views on the platform before it was deleted.

Boycotting thanks to misinformation

One outcome of such AI-generated misinformation on TikTok is brand boycotts, reports NewsGuard. For example, entering a brand name like “Target” into TikTok’s search bar returns “Target boycott 2023” as a suggested term.

Why is TikTok struggling, or perhaps in some cases unwilling, to take down such rampant misinformation on its platform?

“The difficult thing about spotting AI-generated content is it can often be very realistic and convincing… When you create an image or video with AI tools, there’s usually no watermark or way of telling that this is a ‘fake’ piece of content. This will inevitably lead to the rise of misinformation, which is, in my view, one of the biggest dangers of this new technology,” Jamie Sissons, founder of Sydney-based Absolutely.AI, tells The Chainsaw.

“Loneliness, to create something that will go viral, general anger towards society” are some of the reasons why internet users might create and spread fabricated videos, says Sissons.

“This is not new, people have been sharing awful things on social media since it began. The difference is now that the average person has the power to create more convincing and more realistic ‘fake’ content very easily,” he adds.